Abstract

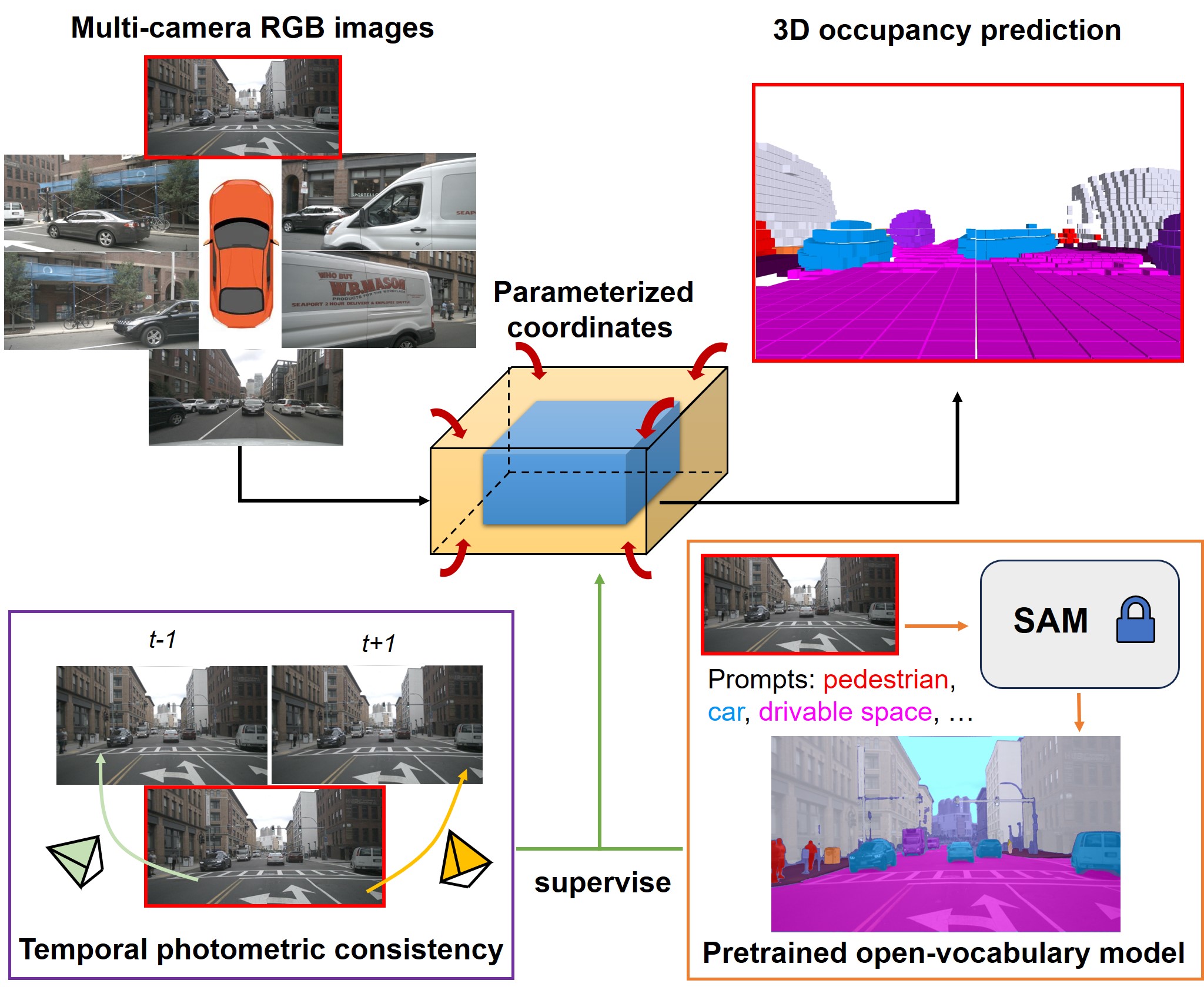

As a fundamental task of vision-based perception, 3D occupancy prediction reconstructs the 3D structure of the surrounding environment and provides detailed information for autonomous driving planning and navigation. However, most existing methods rely heavily on LiDAR point clouds to generate occupancy ground truth, which are not available in a vision-based system. In this paper, we propose OccNeRF, a method for self-supervised multi-camera occupancy prediction. Unlike training with bounded 3D occupancy labels, supervision from raw images requires us to handle unbounded scenes. To address this, we parameterize the reconstructed occupancy fields and reorganize the sampling strategy. Neural rendering is adopted to convert occupancy fields to multi-camera depth maps, which are supervised by multi-frame photometric consistency. Moreover, for semantic occupancy prediction, we design several strategies to polish the prompts and filter the outputs of a pretrained open-vocabulary 2D segmentation model. Extensive experiments on both self-supervised depth estimation and semantic occupancy prediction on the nuScenes dataset demonstrate the effectiveness of our method.

Method

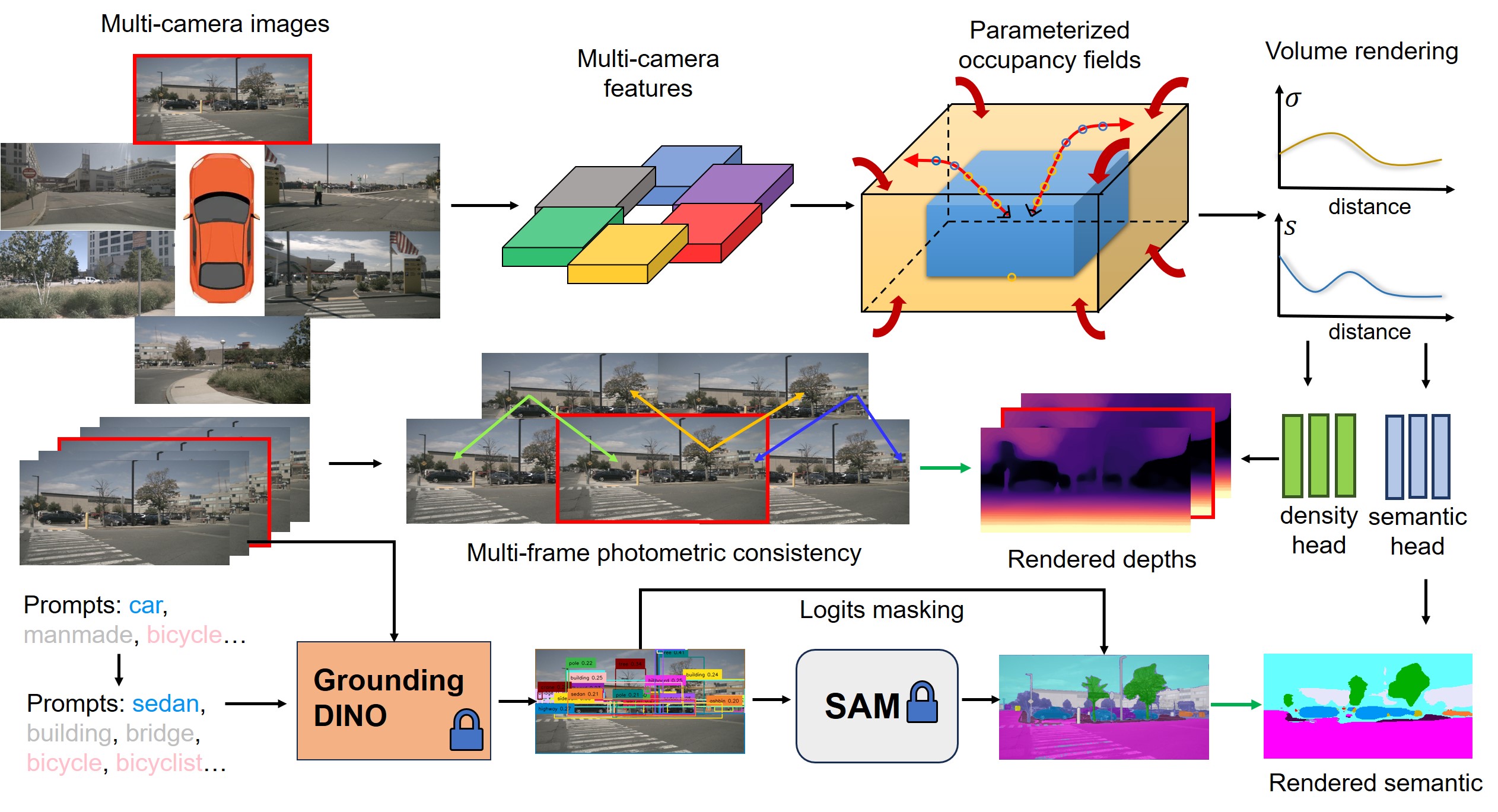

The figure above gives a brief summary of our method. We first use a 2D backbone to extract multi-camera features, which are lifted to 3D space by interpolation to obtain volume features. The parameterized occupancy fields are reconstructed to describe unbounded scenes. To obtain the rendered depth and semantic maps, we perform volume rendering with our reorganized sampling strategy. The multi-frame depths are supervised by a photometric loss. For semantic prediction, we adopt a pretrained Grounded-SAM with prompt cleaning. The green arrows indicate supervision signals.
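To make the rendering step concrete, the following is a minimal sketch of how densities sampled along a single camera ray can be composited into an expected depth, using the standard NeRF-style volume rendering weights. It is an illustration under assumptions, not our released implementation: the function name `render_depth`, the per-bin density input, and the uniform bin edges in the usage lines are all hypothetical, and the sketch does not include the scene parameterization or the reorganized sampling strategy.

```python
import math


def render_depth(densities, t_vals):
    """Composite per-bin densities along one ray into an expected depth.

    densities: non-negative density for each bin along the ray
               (hypothetical output of an occupancy/density field).
    t_vals:    increasing bin edges along the ray, len(densities) + 1 values.

    Returns (expected_depth, accumulated_weight).
    """
    if len(t_vals) != len(densities) + 1:
        raise ValueError("t_vals must hold one more bin edge than densities")

    transmittance = 1.0  # probability the ray has not been blocked so far
    depth = 0.0
    weight_sum = 0.0
    for i, sigma in enumerate(densities):
        delta = t_vals[i + 1] - t_vals[i]
        # Opacity of this bin under the standard exponential model.
        alpha = 1.0 - math.exp(-sigma * delta)
        # Contribution of this bin: reach it unblocked, then terminate in it.
        weight = transmittance * alpha
        midpoint = 0.5 * (t_vals[i] + t_vals[i + 1])
        depth += weight * midpoint
        weight_sum += weight
        transmittance *= 1.0 - alpha
    return depth, weight_sum


# Usage: a ray through empty space that hits an opaque surface in the third bin.
depth, acc = render_depth([0.0, 0.0, 1e9, 0.0], [0.0, 1.0, 2.0, 3.0, 4.0])
```

In this usage example the rendered depth falls at the midpoint of the occupied bin and the accumulated weight is close to one. A depth map rendered this way for every pixel is the kind of quantity that a photometric loss between neighboring frames can supervise.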

Results

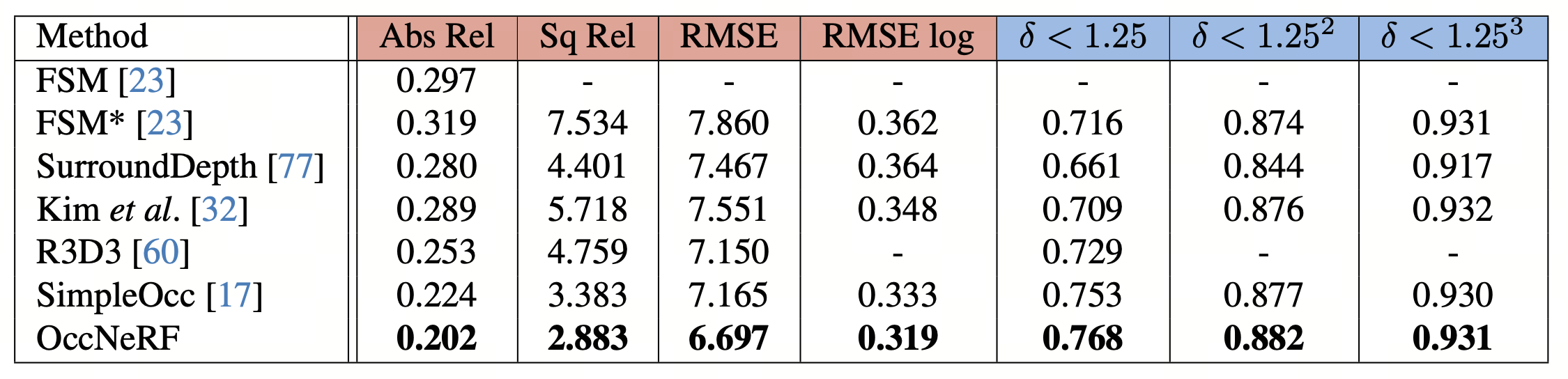

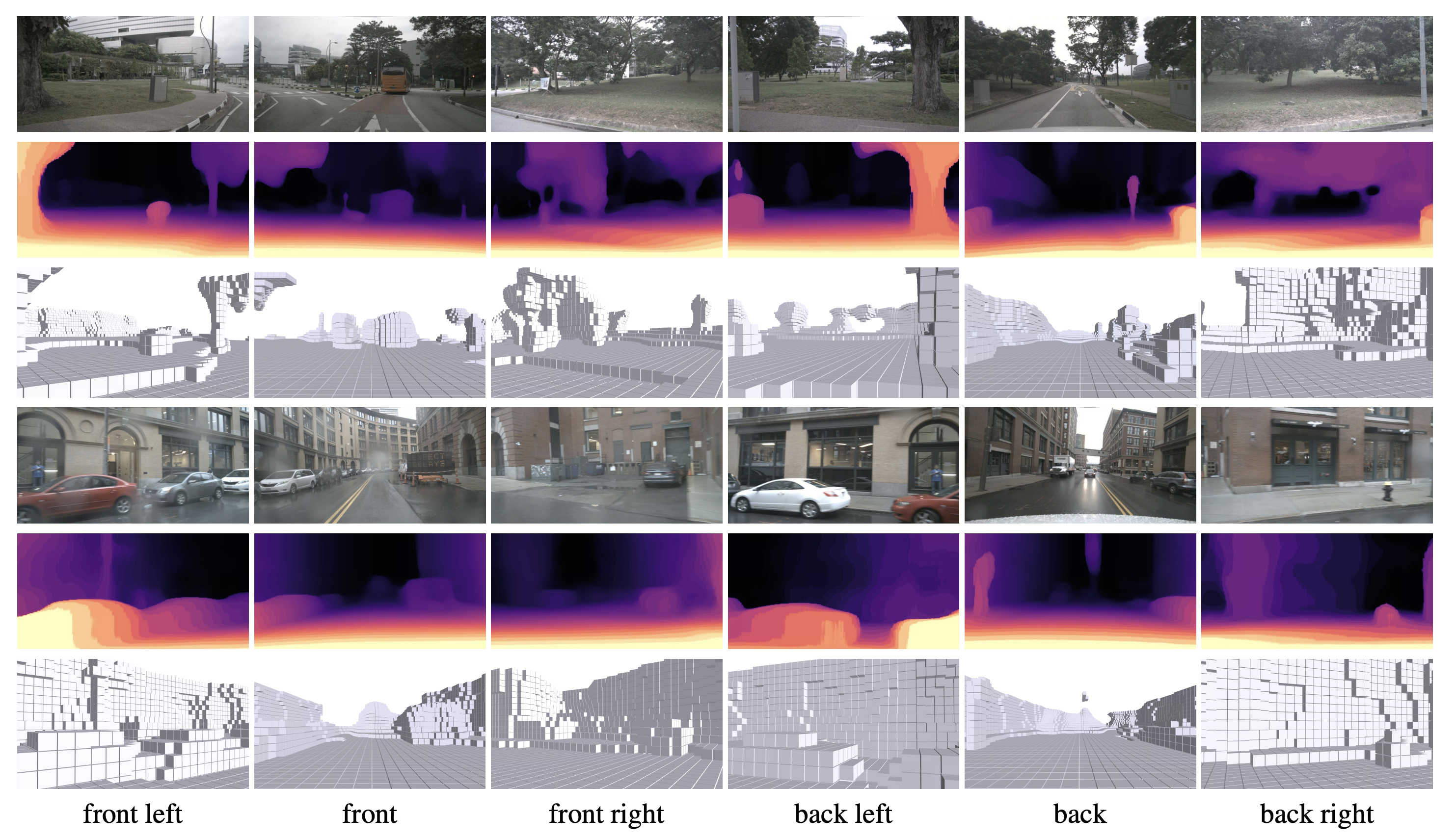

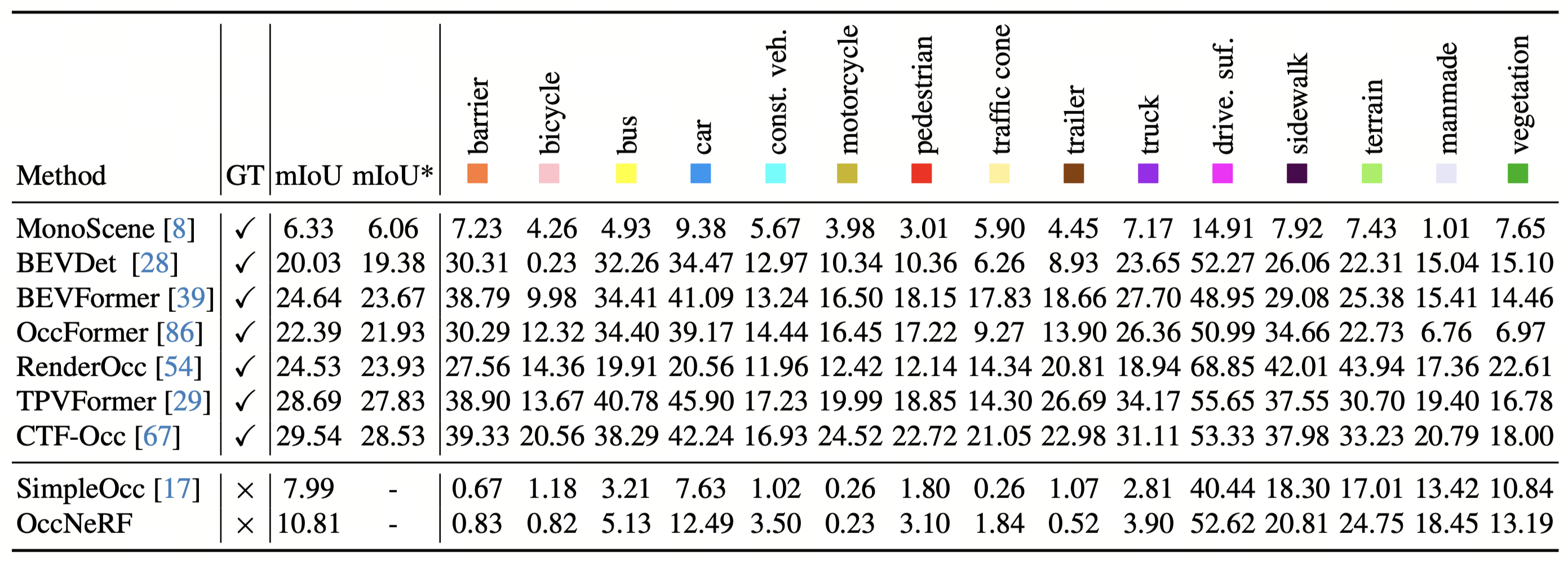

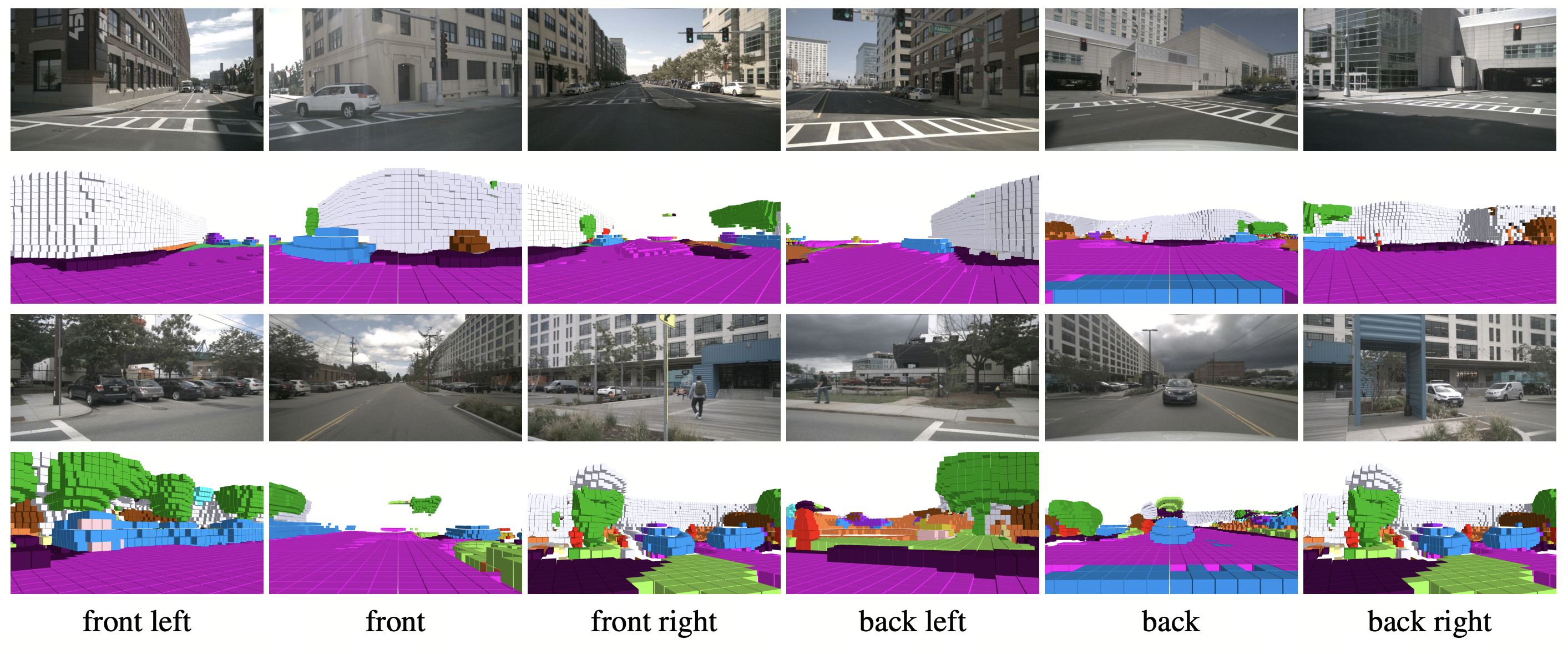

We conducted self-supervised multi-camera depth estimation and 3D occupancy prediction experiments on the nuScenes dataset. Our method does not need any 3D supervision in either task.

Depth Estimation

3D Occupancy Prediction

BibTeX

@article{chubin2023occnerf,
  title = {OccNeRF: Self-Supervised Multi-Camera Occupancy Prediction with Neural Radiance Fields},
  author = {Chubin Zhang and Juncheng Yan and Yi Wei and Jiaxin Li and Li Liu and Yansong Tang and Yueqi Duan and Jiwen Lu},
  journal = {arXiv preprint arXiv:2312.09243},
  year = {2023}
}